Ship Reliable AI Features Faster

Most AI startups ship features that work in demos but fail in production. Edge cases, model inconsistencies, and manual QA cost user trust and slow feature deployments.

Book a Call

Your AI works in controlled tests but behaves unpredictably with real user inputs and edge cases.

Ad-hoc testing scripts and human review stall every release. You can't scale validation as your product grows.

Without systematic eval frameworks, you don't know what breaks until users complain.

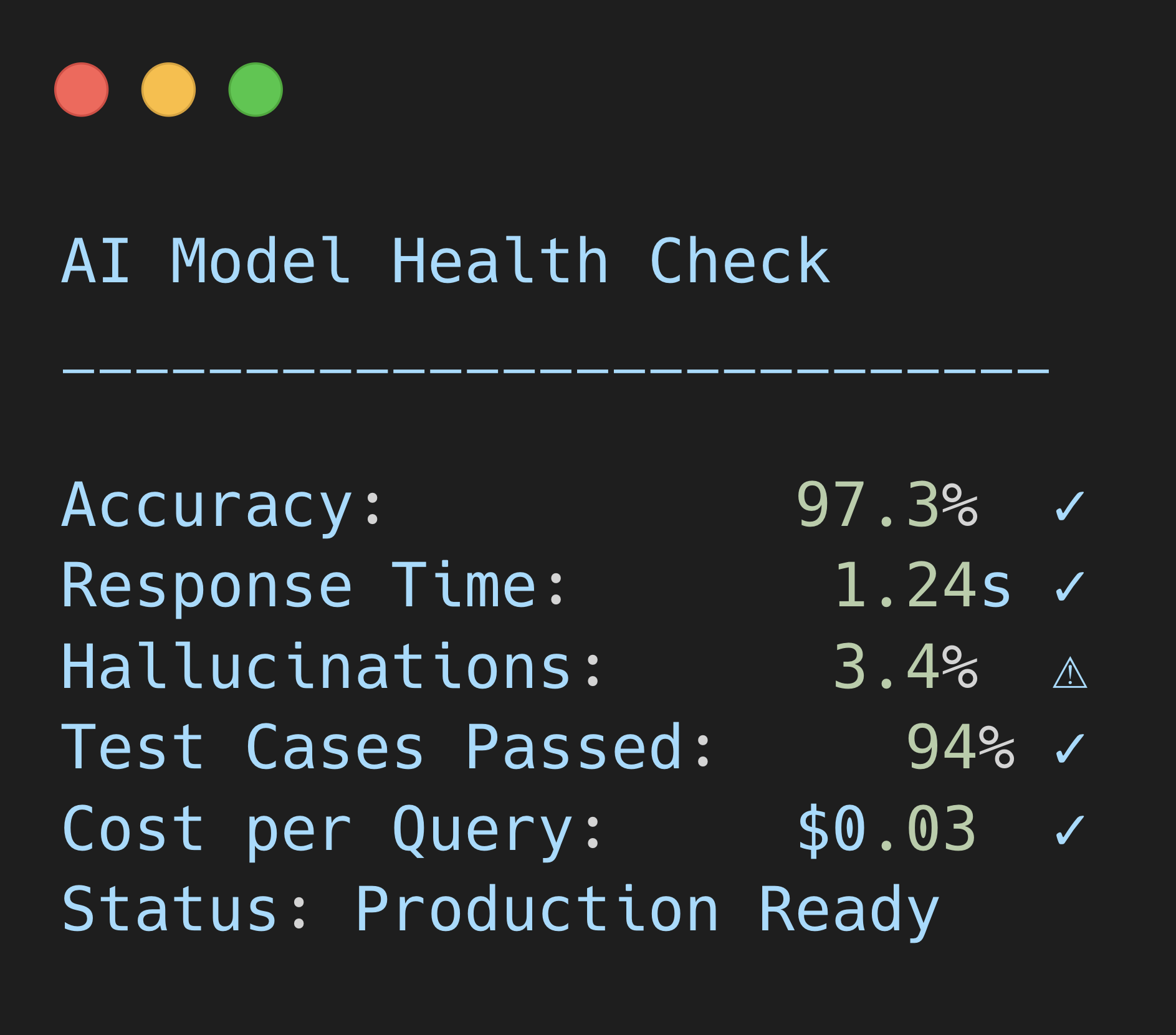

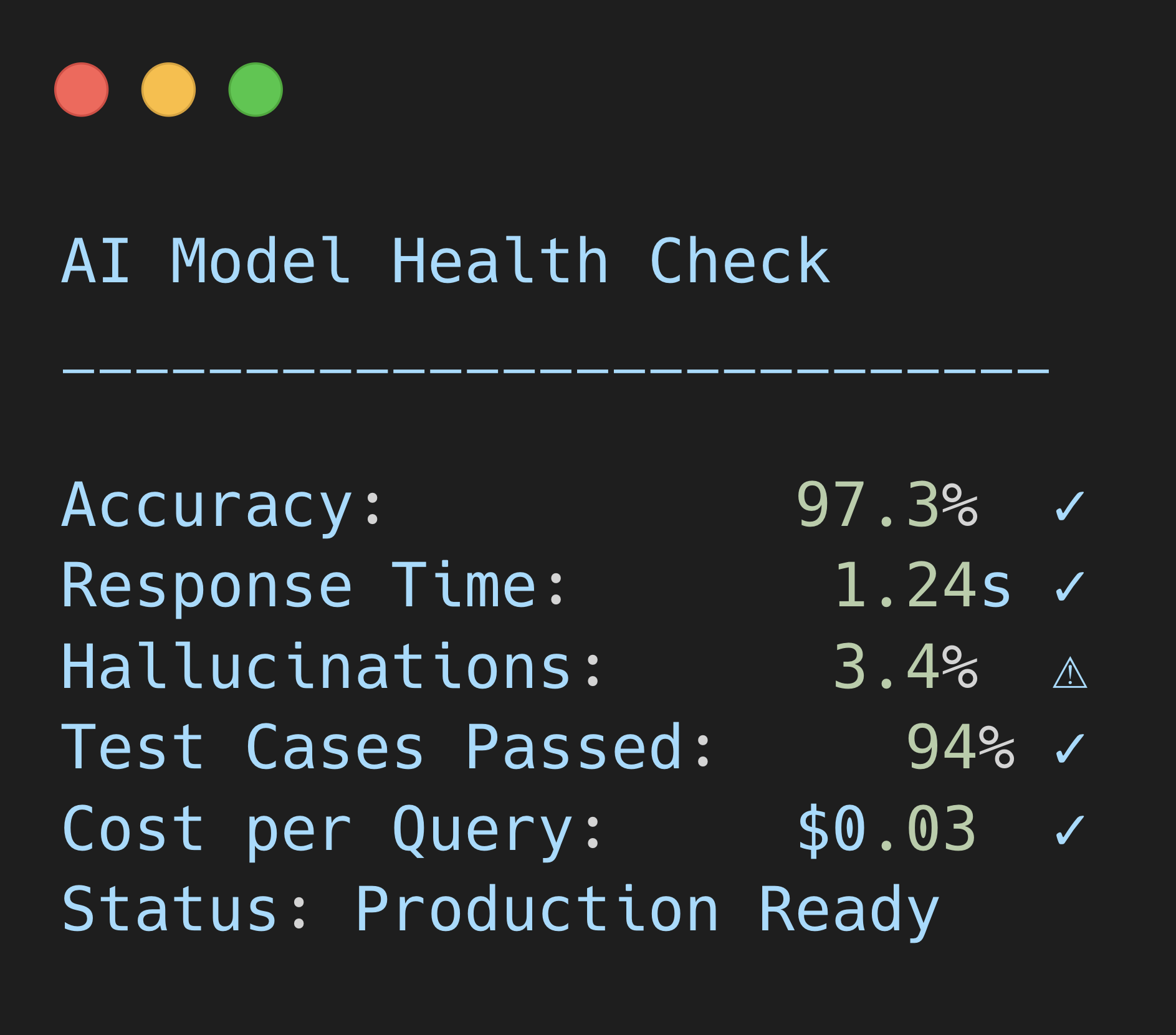

Automated testing that surfaces failure patterns before deployment. Know exactly what breaks and why.

Test the specific scenarios your users hit so that you catch failures before they do.

Move from "it works in our tests" to "it works reliably with real users." Catch inconsistencies early, ship faster.

Let's set up an eval workflow that actually works.

Book a Call